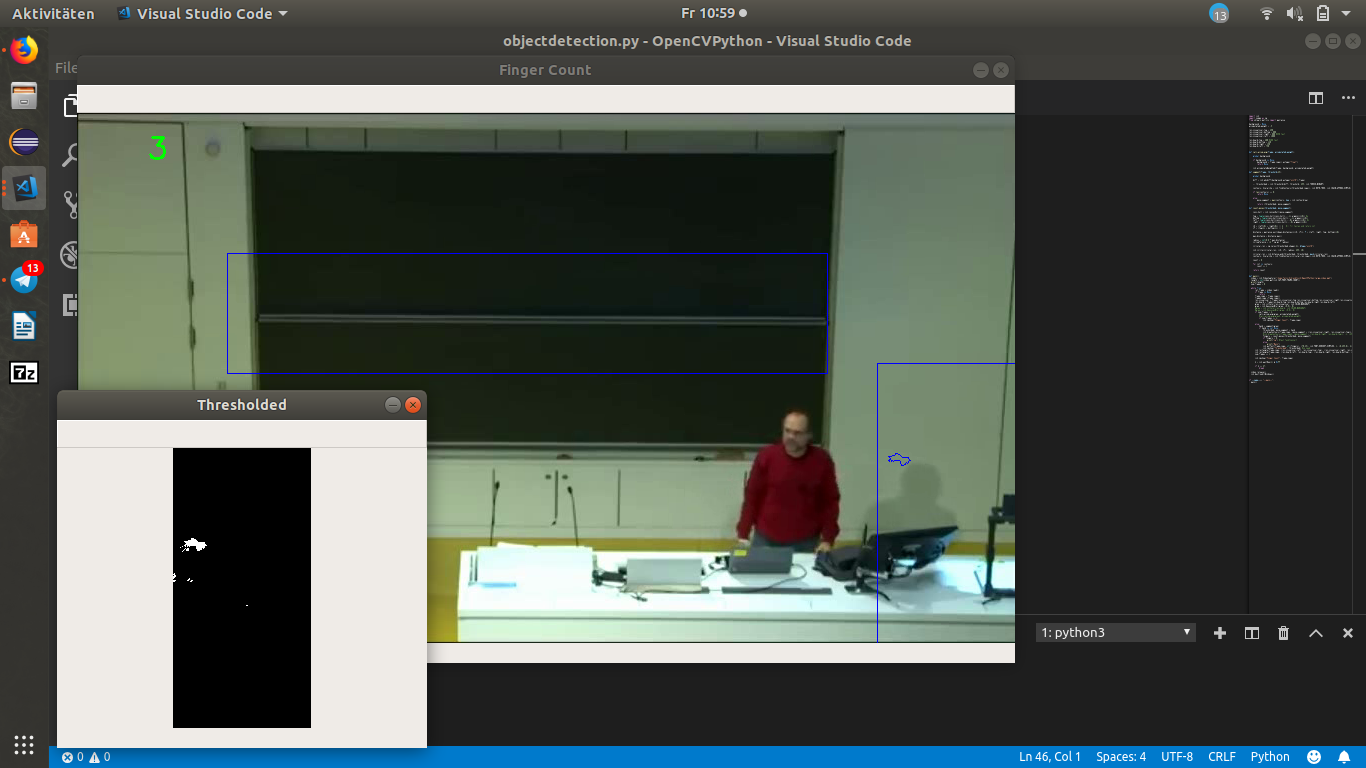

Goal: detect when the person is inside one of the two regions of interest (ROIs). The person does not have to be completely inside.

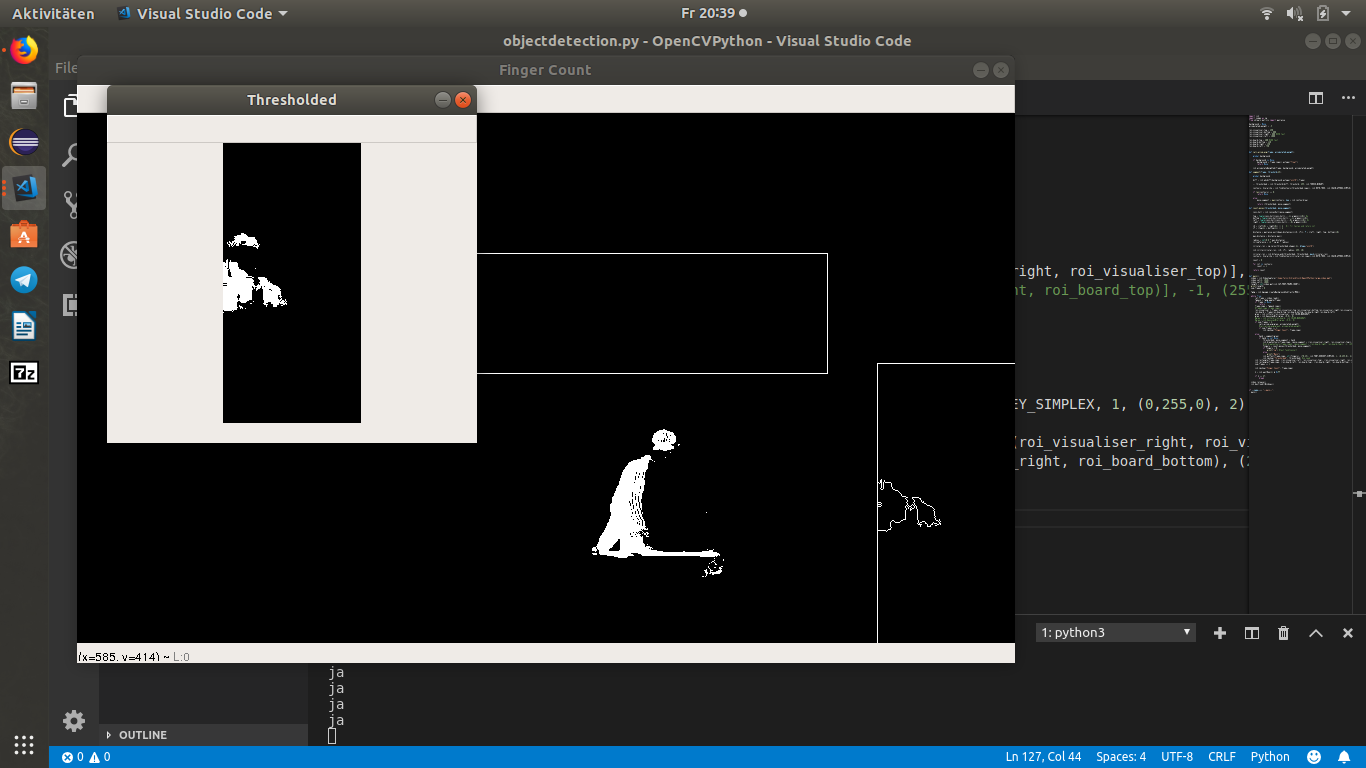
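The "does not have to be completely inside" condition can be read as a rectangle overlap test between the bounding box of the detected contour and an ROI. The following is only a minimal sketch of that idea: the helper name `rects_overlap` is hypothetical, and it assumes both rectangles are given as `(x, y, w, h)`, the format `cv2.boundingRect` returns.

```python
def rects_overlap(box, roi):
    """Return True if two axis-aligned rectangles share any area.

    Both arguments are (x, y, w, h) tuples (assumed format, as from cv2.boundingRect).
    """
    bx, by, bw, bh = box
    rx, ry, rw, rh = roi
    # The rectangles overlap only if they overlap on the x axis AND on the y axis.
    return bx < rx + rw and rx < bx + bw and by < ry + rh and ry < by + bh


# ROI matching the slice frame[250:600, 800:1000] used in the script below.
roi_visualiser = (800, 250, 200, 350)

print(rects_overlap((780, 300, 50, 100), roi_visualiser))  # True: box sticks into the ROI
print(rects_overlap((100, 300, 50, 100), roi_visualiser))  # False: box is far to the left
```

A partial overlap is enough for the test to succeed, so the person only has to reach into the ROI.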

That works so far, but even the person's shadow is detected, which of course should not happen.

Does anyone have a tip for me, or an alternative idea?

Code:


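import cv2
import numpy as np
from sklearn.metrics import pairwise

background = None
# Weight of the newest frame in the running average.
# cv2.accumulateWeighted expects a value between 0 and 1 (the pasted value was -5).
accumulated_weight = 0.5

# ROIs are sliced as frame[top:bottom, right:left], so "right" holds the smaller x value.
roi_visualiser_top = 250
roi_visualiser_bottom = 600
roi_visualiser_right = 800  # 350 test
roi_visualiser_left = 1000

# NOTE: top (260) is larger than bottom (140), so frame[top:bottom, ...] is an empty slice.
# The board ROI is currently only drawn, not analysed.
roi_board_top = 260  # 400 test
roi_board_bottom = 140
roi_board_right = 150
roi_board_left = 750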

def calc_accum_avg(frame, accumulated_weight):
    """Initialise the background with the first frame, then update the running average."""
    global background
    if background is None:
        background = frame.copy().astype("float")
        return None
    cv2.accumulateWeighted(frame, background, accumulated_weight)

def segment(frame, threshold=25):
    """Return (thresholded image, largest contour), or None if nothing differs from the background."""
    global background
    diff = cv2.absdiff(background.astype("uint8"), frame)
    _, thresholded = cv2.threshold(diff, threshold, 255, cv2.THRESH_BINARY)
    contours, hierarchy = cv2.findContours(thresholded.copy(), cv2.RETR_TREE, cv2.CHAIN_APPROX_SIMPLE)
    if len(contours) == 0:
        return None
    # Keep only the largest moving region.
    move_segment = max(contours, key=cv2.contourArea)
    return (thresholded, move_segment)

def count_moves(thresholded, move_segment):
    """Count the contours that cross a ring around the centre of the moving region."""
    conv_hull = cv2.convexHull(move_segment)

    # Extreme points of the convex hull.
    top = tuple(conv_hull[conv_hull[:, :, 1].argmin()][0])  # y
    bottom = tuple(conv_hull[conv_hull[:, :, 1].argmax()][0])
    left = tuple(conv_hull[conv_hull[:, :, 0].argmin()][0])  # x
    right = tuple(conv_hull[conv_hull[:, :, 0].argmax()][0])

    # Centre of the hull; // is integer division. Cast to int because cv2.circle expects Python ints.
    cX = int((left[0] + right[0]) // 2)
    cY = int((top[1] + bottom[1]) // 2)

    distance = pairwise.euclidean_distances([(cX, cY)], Y=[left, right, top, bottom])[0]
    max_distance = distance.max()
    radius = int(0.8 * max_distance)

    # Draw a ring (thickness 10) and keep only the thresholded pixels that lie on it.
    circular_roi = np.zeros(thresholded.shape[:2], dtype="uint8")
    cv2.circle(circular_roi, (cX, cY), radius, 255, 10)
    circular_roi = cv2.bitwise_and(thresholded, thresholded, mask=circular_roi)

    contours, hierarchy = cv2.findContours(circular_roi.copy(), cv2.RETR_TREE, cv2.CHAIN_APPROX_SIMPLE)
    return len(contours)

def main():
    video = cv2.VideoCapture("/home/felix/Schreibtisch/OpenCVPython/large_video.mp4")
    length = int(video.get(cv2.CAP_PROP_FRAME_COUNT))
    print(length)
    num_frames = 0

    while True:
        ret, frame = video.read()
        if not ret:
            break  # end of video: leave the loop so the cleanup below still runs

        frame_copy = frame.copy()
        frame2_copy = frame.copy()

        roi_visualiser = frame[roi_visualiser_top:roi_visualiser_bottom, roi_visualiser_right:roi_visualiser_left]
        roi_board = frame[roi_board_top:roi_board_bottom, roi_board_right:roi_board_left]

        gray = cv2.cvtColor(roi_visualiser, cv2.COLOR_BGR2GRAY)
        gray = cv2.GaussianBlur(gray, (9, 9), 0)
        #gray = cv2.cvtColor(roi_board, cv2.COLOR_BGR2GRAY)
        #gray = cv2.GaussianBlur(gray, (9,9), 0)

        if num_frames < 2:
            # The first two frames are only used to build the background model.
            calc_accum_avg(gray, accumulated_weight)
            #calc_accum_avg(gray2, accumulated_weight)
            if num_frames <= 1:
                cv2.imshow("Finger Count", frame_copy)
        else:
            hand = segment(gray)
            if hand is not None:
                thresholded, move_segment = hand
                # Shift the contour from ROI coordinates back to full-frame coordinates.
                cv2.drawContours(frame_copy, [move_segment + (roi_visualiser_right, roi_visualiser_top)], -1, (255, 0, 0), 1)
                #cv2.drawContours(frame_copy2, [move_segment + (roi_board_right, roi_board_top)], -1, (255,0,0), 1)
                fingers = count_moves(thresholded, move_segment)
                if fingers > 0:
                    print("ja")  # test works
                else:
                    print("Nein")
                cv2.putText(frame_copy, str(fingers), (70, 45), cv2.FONT_HERSHEY_SIMPLEX, 1, (0, 255, 0), 2)  # no need
                cv2.imshow("Thresholded", thresholded)  # no need

        cv2.rectangle(frame_copy, (roi_visualiser_left, roi_visualiser_top), (roi_visualiser_right, roi_visualiser_bottom), (255, 0, 0), 1)
        cv2.rectangle(frame_copy, (roi_board_left, roi_board_top), (roi_board_right, roi_board_bottom), (255, 0, 0), 1)

        num_frames += 1
        cv2.imshow("Finger Count", frame_copy)

        k = cv2.waitKey(1) & 0xFF
        if k == 27:  # Esc
            break

    video.release()
    cv2.destroyAllWindows()


if __name__ == "__main__":
    main()
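
One direction that might help with the shadow problem is OpenCV's MOG2 background subtractor, which can label shadow pixels separately from real foreground. With `detectShadows=True` its mask uses 255 for foreground and, by default, 127 for shadows, so the shadow pixels can be dropped before looking for contours. This is only a rough sketch and has not been tried on the video above: the helper names `shadow_free_mask` and `demo`, the 5x5 opening kernel and the 500-pixel limit are all assumptions for illustration.

```python
import numpy as np


def shadow_free_mask(subtractor, frame):
    """Apply a background subtractor and keep only definite foreground pixels.

    Assumes a MOG2-style mask: 255 = foreground, 127 = shadow, 0 = background.
    """
    mask = subtractor.apply(frame)
    # Everything that is not exactly 255 (including the shadow value) becomes 0.
    return np.where(mask == 255, 255, 0).astype(np.uint8)


def demo(video_path):
    """Hypothetical usage: print "ja" while non-shadow foreground is inside the ROI."""
    import cv2  # imported here so the helper above only needs NumPy

    subtractor = cv2.createBackgroundSubtractorMOG2(detectShadows=True)
    video = cv2.VideoCapture(video_path)
    kernel = np.ones((5, 5), np.uint8)  # assumed size, tune for the video
    while True:
        ret, frame = video.read()
        if not ret:
            break
        roi = frame[250:600, 800:1000]  # same slice as roi_visualiser in the script
        mask = shadow_free_mask(subtractor, roi)
        # Opening removes small speckles that remain after the shadow pixels are dropped.
        mask = cv2.morphologyEx(mask, cv2.MORPH_OPEN, kernel)
        # 500 pixels is a guessed minimum size for "a person is in the ROI".
        print("ja" if cv2.countNonZero(mask) > 500 else "Nein")
    video.release()
```

MOG2 learns the background continuously and judges shadows on the colour frame, so this sketch passes the BGR ROI directly instead of the blurred grayscale image used in the script.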